You’ve heard the buzz about speech-to-text AI transforming customer service, but what does it actually do for your business? Many UK small business owners recognise the potential but lack clarity on how the technology works, its real-world accuracy, and where it fits into daily operations. This guide cuts through the confusion, explaining how speech-to-text AI converts spoken language into text, what accuracy you can expect with British accents and noisy environments, and how to choose solutions that enhance customer service whilst protecting data under GDPR.

Table of Contents

- Key takeaways

- How speech-to-text AI works: the technology behind the transformation

- Accuracy and limitations of speech-to-text AI for UK small businesses

- Practical benefits and use cases of speech-to-text AI for UK small businesses

- Choosing the right speech-to-text AI solution for your UK small business

- Explore AI solutions tailored for UK small business success

- Frequently asked questions about speech-to-text AI for small businesses

Key Takeaways

| Point | Details |
|---|---|
| How it works | Speech to text converts audio into text using waveform analysis, spectrograms and neural networks to recognise spoken language. |
| Accuracy varies | Word error rates vary with background noise, accents and jargon, and real world conditions often reduce accuracy. |
| UK SME benefits | UK small businesses can gain improved customer service through automation and reduced operating costs. |
| GDPR compliant | Choosing models that are GDPR compliant and capable of handling data securely is essential for UK SMEs. |

How speech-to-text AI works: the technology behind the transformation

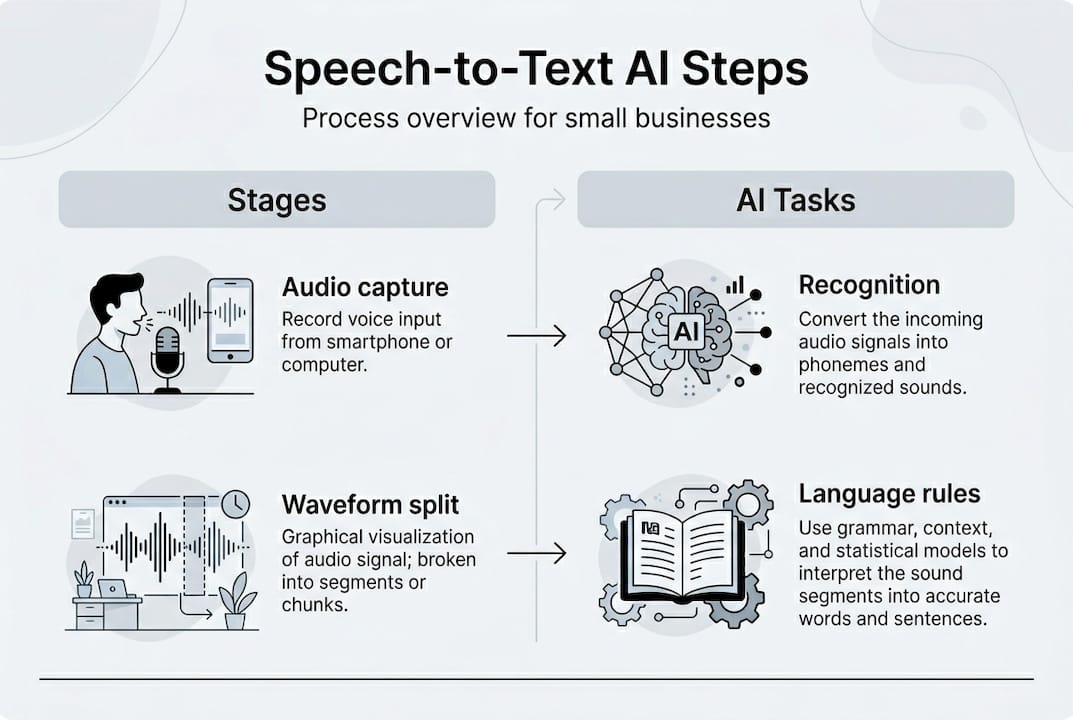

Understanding the technical process helps you evaluate vendors and set realistic expectations. When someone speaks into a microphone, the audio signal gets digitised into a waveform that computers can analyse. The system then converts this waveform into a spectrogram, a visual representation showing how sound frequencies change over time. This spectrogram becomes the input for sophisticated neural networks trained to recognise speech patterns.

The AI breaks down audio into tiny sequential frames, each lasting about 25 milliseconds. Within each frame, the neural network identifies phonemes, the smallest units of sound in language. English contains approximately 44 phonemes, and the model must distinguish between similar sounds like “th” in “think” versus “this.” Core methodologies include acoustic modelling with Transformers and language modelling that predicts which words make sense in context.
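To make the framing and spectrogram steps concrete, here is a minimal Python sketch that uses only the standard library. It is an illustration rather than any vendor's actual feature extraction: the 8 kHz sample rate, the 10 ms step between frames, the Hann window and every function name are assumptions chosen for the example. Only the 25 ms frame length comes from the description above.

```python
import cmath
import math

SAMPLE_RATE = 8000  # samples per second (assumed; common for telephone audio)
FRAME_MS = 25       # frame length taken from the text
HOP_MS = 10         # step between frame starts (assumed, not stated in the text)


def make_tone(freq_hz, duration_s, sample_rate=SAMPLE_RATE):
    """Generate a pure sine wave as a stand-in for digitised speech."""
    n = round(duration_s * sample_rate)
    return [math.sin(2 * math.pi * freq_hz * t / sample_rate) for t in range(n)]


def split_into_frames(samples, sample_rate=SAMPLE_RATE,
                      frame_ms=FRAME_MS, hop_ms=HOP_MS):
    """Cut the waveform into short overlapping frames."""
    frame_len = sample_rate * frame_ms // 1000
    hop_len = sample_rate * hop_ms // 1000
    last_start = len(samples) - frame_len
    return [samples[s:s + frame_len] for s in range(0, last_start + 1, hop_len)]


def magnitude_spectrum(frame):
    """Apply a Hann window, then a naive DFT, keeping non-negative frequencies."""
    n = len(frame)
    windowed = [x * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
                for i, x in enumerate(frame)]
    return [abs(sum(windowed[t] * cmath.exp(-2j * math.pi * k * t / n)
                    for t in range(n)))
            for k in range(n // 2 + 1)]


def spectrogram(samples):
    """One magnitude spectrum per frame: rows are time, columns are frequency."""
    return [magnitude_spectrum(frame) for frame in split_into_frames(samples)]


def dominant_frequency(spectrum, sample_rate=SAMPLE_RATE):
    """Return the frequency (Hz) of the strongest bin; assumes an even frame length."""
    frame_len = (len(spectrum) - 1) * 2
    peak_bin = max(range(len(spectrum)), key=spectrum.__getitem__)
    return peak_bin * sample_rate / frame_len


if __name__ == "__main__":
    tone = make_tone(440, 0.1)
    for row in spectrogram(tone):
        print(dominant_frequency(row))
```

Real systems use a fast Fourier transform and usually convert the result to a mel scale before passing it to the neural network; the naive transform here is only meant to show what each frame of a spectrogram contains.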

Natural language processing applies grammatical rules and contextual understanding to improve accuracy. If the acoustic model hears sounds that could be “their,” “there,” or “they’re,” the language model examines surrounding words to select the correct spelling. Modern end-to-end systems like OpenAI’s Whisper map audio directly to text without separate phoneme stages, using massive training datasets to learn patterns.

Processing stages breakdown:

| Stage | Process | Output |
|---|---|---|
| Audio capture | Microphone records speech, digitises waveform | Digital audio file |
| Feature extraction | Converts audio to spectrogram showing frequency patterns | Visual sound representation |
| Acoustic modelling | Neural network identifies phonemes in sequential frames | Phoneme sequence |
| Language modelling | Applies grammar and context to predict words | Raw text transcription |
| Post-processing | Adds punctuation, capitalisation, formatting | Final formatted text |

Pro Tip: Vendors who clearly explain their acoustic and language modelling approach demonstrate technical competence worth trusting with your business audio.

In real-time applications, this multi-stage process typically completes within a fraction of a second. An AI voice bot for customer service is essentially this speech-to-text foundation combined with response generation, so the quality of transcription directly affects how well the bot understands customer enquiries.

Accuracy and limitations of speech-to-text AI for UK small businesses

Word error rate (WER) measures accuracy as the percentage of words transcribed incorrectly. A WER of 5% means five words wrong per hundred, which sounds minor until you consider that a 200-word customer call could contain ten errors, potentially missing critical details like appointment times or contact information. Top models achieve error rates from 2.3% to 15.85%, but real-world conditions often push error rates higher.
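If you want to measure WER on your own recordings, the calculation is simple enough to sketch in a few lines of Python. The version below follows the common definition: the number of substituted, deleted and inserted words needed to turn the AI transcript into a correct human transcript, divided by the number of words in the correct transcript. The function name and the sample sentences are illustrative assumptions, and real evaluations usually also normalise punctuation and number formats before comparing.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / words in reference.

    reference:  the correct transcript, typed by a human
    hypothesis: the transcript produced by the speech-to-text system
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # Word-level edit distance, keeping only one previous row of the table.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    correct = "book a table for four at seven"
    transcribed = "book a table for for at eleven"
    print(f"WER: {word_error_rate(correct, transcribed):.1%}")
```

In the sample, two of seven words are wrong, and both happen to be the details that matter most for a booking: the party size and the time. That is why a low headline WER does not guarantee that the important words survive.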

Several factors degrade accuracy in business settings. Background noise from busy offices, traffic, or multiple conversations confuses the acoustic model, sometimes tripling error rates. Regional UK accents present challenges because most AI models train predominantly on neutral English voices. A strong Glaswegian or Welsh accent might produce significantly more errors than Received Pronunciation. Industry-specific jargon and technical terminology also cause problems, as the language model lacks context for specialised vocabulary.

Common accuracy challenges for UK small businesses:

- Background noise from open-plan offices, retail environments, or outdoor locations interfering with audio clarity

- Regional accents including Scottish, Welsh, Northern Irish, and strong regional English variations reducing recognition accuracy

- Industry jargon and technical terms not included in the model’s training vocabulary causing misrecognition

- Multiple speakers talking simultaneously or interrupting each other creating overlapping audio the system cannot separate

- Poor audio quality from low-end microphones, mobile phones, or compressed telephony connections losing sound detail

- Fast speech, mumbling, or unclear articulation making phoneme identification difficult for the acoustic model

These weaknesses affect service quality directly whenever a transcription error leads to a misunderstood customer request. Understanding them helps you set appropriate expectations and design workflows that compensate.

Model performance comparison:

| Model | Clean audio WER | Noisy audio WER | Accent robustness | Best use case |
|---|---|---|---|---|
| Whisper Large | 2.3% | 8-12% | Good | General business transcription |
| Google Cloud | 4.1% | 10-15% | Excellent | Multi-accent customer service |
| Azure Speech | 3.8% | 9-14% | Very good | Enterprise integration |
| AWS Transcribe | 5.2% | 12-18% | Good | Cost-sensitive applications |

Pro Tip: Request trial access and test models with your actual business audio, including difficult accents and background noise levels you encounter daily, before committing to a vendor.

Hybrid approaches combining AI transcription with human review deliver the best results for critical applications. The AI handles the bulk transcription work quickly and cheaply, then human editors correct errors in sections flagged as low confidence. This workflow balances speed, cost, and accuracy. When deciding where to use AI in your business, consider where perfect accuracy matters and where 90-95% suffices. The limitations of AI speech recognition remain real, but thoughtful implementation works around them.
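The routing step in a hybrid workflow can be sketched as follows. This is a simplified illustration under stated assumptions: it supposes the speech-to-text service returns a confidence score between 0 and 1 for each segment, and the `Segment` type, the `split_for_review` function and the 0.85 threshold are all hypothetical. Check what your vendor actually reports, and tune the threshold against your own audio.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    text: str
    confidence: float  # 0.0 to 1.0, as reported by the transcription service (assumed)


def split_for_review(segments, threshold=0.85):
    """Separate segments the AI is confident about from those needing a human check.

    The threshold is an illustrative starting point, not a recommended value.
    """
    accepted = [s for s in segments if s.confidence >= threshold]
    flagged = [s for s in segments if s.confidence < threshold]
    return accepted, flagged


if __name__ == "__main__":
    call = [
        Segment("Can I book a table for Friday", 0.97),
        Segment("at half seven", 0.62),
        Segment("under the name Price", 0.91),
    ]
    accepted, flagged = split_for_review(call)
    for segment in flagged:
        print(f"Needs review ({segment.confidence:.2f}): {segment.text}")
```

Lowering the threshold sends fewer segments to human editors and saves time; raising it catches more errors at higher cost. For appointment times, names, and contact details, a stricter threshold is usually worth the extra review.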

Practical benefits and use cases of speech-to-text AI for UK small businesses

The technology delivers measurable operational improvements when applied strategically. UK businesses have reduced call handling costs by up to 85% and achieved 60-80% of enquiries handled automatically through speech-to-text powered AI receptionists. These aren’t hypothetical gains but documented results from businesses similar to yours.

Customer service teams benefit enormously from automatic call transcription. Every phone conversation gets converted to searchable text, creating a permanent record for quality assurance, training, and dispute resolution. Managers can review transcripts rather than listening to hours of recordings, identifying service issues and coaching opportunities faster. The transcripts also feed into analytics systems that spot trending customer concerns or frequently asked questions.

Key business benefits:

- Dramatic cost reduction by automating routine enquiry handling that previously required human staff time and attention

- Faster customer response times with AI systems answering calls instantly rather than customers waiting in queues

- Automatic documentation of all customer interactions creating searchable records without manual note-taking

- Improved accessibility for deaf or hard-of-hearing customers who can read real-time transcriptions of conversations

- Multi-language support enabling small teams to serve diverse customer bases through automatic translation workflows

- Enhanced training quality using real conversation transcripts as teaching materials for new staff members

UK sectors apply the technology creatively. Hospitality businesses use speech-to-text AI to handle booking enquiries, converting phone requests into calendar entries automatically. Medical practices benefit significantly, with NHS trusts reporting substantial time savings as doctors dictate notes that appear as formatted text instantly. Legal firms transcribe client consultations, creating accurate records whilst maintaining GDPR compliance through encrypted processing.

Bilingual businesses serving both English and Welsh speakers, or English and other community languages, deploy speech-to-text AI that detects language automatically and transcribes accordingly. This eliminates the need for separate phone lines or dedicated bilingual staff for every shift. The technology integrates with translation services to provide responses in the customer’s preferred language.

Impact snapshot: Businesses implementing speech-to-text AI report call handling cost reductions of up to 85% and 60-80% automation of routine enquiries, freeing staff for complex customer needs.

GDPR compliance remains critical for UK deployment. Ensure your chosen solution processes audio data securely, provides clear data retention policies, and allows you to delete customer recordings on request. British English accent support matters because models trained primarily on American voices perform poorly with regional UK speech patterns. When exploring voice AI in hospitality or AI receptionist solutions, verify the vendor explicitly supports British accents and complies with UK data protection requirements.
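A retention policy is easier to discuss with a vendor when you can state it as a rule. The sketch below shows one way to express such a rule in Python: a recording becomes due for deletion once it is older than the retention period, or as soon as the customer has asked for their data to be erased. The `Recording` type, the function name and the 30-day default are hypothetical, and the right retention period for your business is a legal and operational decision rather than something this example can settle.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Recording:
    recording_id: str
    customer_id: str
    recorded_on: date


def due_for_deletion(recordings, today, retention_days=30,
                     erasure_requests=frozenset()):
    """Return recordings past the retention window or covered by an erasure request.

    retention_days=30 is an illustrative default, not legal guidance.
    """
    cutoff = today - timedelta(days=retention_days)
    return [
        r for r in recordings
        if r.recorded_on < cutoff or r.customer_id in erasure_requests
    ]


if __name__ == "__main__":
    recordings = [
        Recording("r1", "cust-a", date(2025, 5, 1)),
        Recording("r2", "cust-b", date(2025, 6, 20)),
        Recording("r3", "cust-c", date(2025, 6, 25)),
    ]
    to_delete = due_for_deletion(
        recordings, today=date(2025, 6, 30), erasure_requests={"cust-c"}
    )
    print([r.recording_id for r in to_delete])
```

When evaluating vendors, ask whether their platform can apply a rule like this automatically and whether deletion also covers transcripts and backups, not just the original audio.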

Integration with existing business systems multiplies value. Speech-to-text AI that connects to your CRM automatically logs call summaries, updates customer records, and triggers follow-up tasks. Integration with telephony systems enables features like voicemail transcription delivered via email, so you read messages faster than listening. These connections transform speech-to-text from a standalone tool into a productivity multiplier across your entire operation.

Choosing the right speech-to-text AI solution for your UK small business

Selection requires balancing technical capability, compliance requirements, and practical business fit. Following a structured evaluation process prevents costly mistakes and ensures the solution actually improves operations rather than creating new headaches.

Key selection criteria:

- GDPR compliance with explicit UK data protection certifications, clear data processing agreements, and transparent retention policies

- British English accent robustness verified through testing with actual regional accents your business encounters regularly

- Model accuracy specifications including published WER benchmarks for both clean and noisy audio conditions

- Vendor support quality including UK-based assistance, clear documentation, and responsive technical help when issues arise

- Integration capabilities with your existing CRM, telephony, and business software through APIs or pre-built connectors

- Pricing transparency covering all costs including per-minute transcription fees, monthly minimums, and premium feature charges

Cloud-based processing offers advantages and drawbacks compared to on-device alternatives. Cloud systems access the latest AI models and handle unlimited transcription volume without local hardware investment. However, they introduce latency as audio travels to remote servers, require constant internet connectivity, and send potentially sensitive customer conversations to third-party infrastructure. On-device processing keeps data local, works offline, and eliminates per-minute fees, but requires more powerful hardware and may use older, less accurate models.

Cloud versus on-device comparison:

- Cloud processing provides access to cutting-edge models, unlimited capacity, and automatic updates without local maintenance

- Cloud introduces latency, ongoing per-minute costs, and requires trusting third parties with customer audio data

- On-device processing ensures data privacy, eliminates recurring fees, and works without internet connectivity

- On-device requires upfront hardware investment, uses potentially less accurate models, and needs manual updates

Fine-tuning models with your industry vocabulary improves accuracy for specialised terms. If you frequently discuss specific product names, technical specifications, or industry jargon, providing training examples helps the AI learn these terms. However, excessive fine-tuning on narrow vocabulary can reduce performance on general speech, a phenomenon called overfitting. Most UK small businesses achieve better results using robust general models rather than attempting custom training.

Testing remains essential before commitment. Request trial access and evaluate the system with real business audio, not sanitised demo recordings. Include samples with background noise, various accents, and typical conversation scenarios. Measure actual WER on your audio, not vendor-published benchmarks. Ask vendors about hybrid human-AI workflows if perfect accuracy matters for certain use cases.

When evaluating AI voice bots, remember that speech-to-text forms the foundation. Poor transcription quality undermines the entire system, causing the bot to misunderstand requests and frustrate customers. Examining AI case studies from similar UK businesses reveals which vendors deliver reliable performance in real-world conditions. A basic understanding of how ChatGPT-style language models work also helps contextualise how modern AI powers both transcription and response generation.

Pro Tip: Ask vendors directly about their human-AI hybrid options and secure data handling procedures, as transparency on these points signals trustworthy partners.

Explore AI solutions tailored for UK small business success

Applying speech-to-text AI effectively requires understanding both the technology and your specific business context. AIM Agency specialises in helping UK small businesses integrate AI agents that leverage speech-to-text capabilities to enhance customer service whilst maintaining the natural, professional tone your clients expect. Our AI receptionists handle calls 24/7, respond to frequently asked questions accurately, and book qualified sales appointments without human intervention.

Exploring AI agent development services reveals how custom solutions address your unique operational challenges, from handling regional accent variations to integrating with your existing business software. Our AI call handling services demonstrate practical applications of speech-to-text technology that reduce costs whilst improving customer experience. AI receptionist solutions show how small teams can deliver enterprise-level availability and responsiveness.

Pro Tip: Contact AI specialists early in your evaluation process to discuss how speech-to-text solutions can be tailored to your business needs, pronunciation nuances, and integration requirements before committing to generic platforms.

Frequently asked questions about speech-to-text AI for small businesses

What kind of accuracy can I expect with speech-to-text AI in noisy environments?

Expect word error rates of roughly 8-18% in moderately noisy conditions like busy offices or retail spaces, compared with 2-5% in quiet settings. Background noise, multiple speakers, and poor audio quality can triple error rates, so investing in quality microphones and testing in your actual environment is essential.

Is speech-to-text AI compliant with UK data protection laws like GDPR?

Compliance depends entirely on your vendor choice and implementation. Select providers offering explicit GDPR certifications, data processing agreements, and UK or EU-based data storage. Ensure you can delete customer recordings on request and maintain clear records of how audio data gets processed and retained.

Can speech-to-text AI handle different UK accents, such as Welsh or Scottish?

Capability varies significantly between models. Systems trained predominantly on American or neutral English voices struggle with strong regional UK accents, producing higher error rates. Test prospective solutions with actual speakers representing the accents your business encounters, and prioritise vendors explicitly supporting British English variations.

How does speech-to-text AI integrate with existing business software like CRM systems?

Most modern solutions offer API connections or pre-built integrations with popular CRM platforms, enabling automatic logging of call transcripts, customer record updates, and task creation. Integration quality varies, so verify specific compatibility with your existing software stack and request demonstrations of the actual workflow before committing.

What are the cost implications of deploying speech-to-text AI for a small business?

Cloud solutions typically charge per minute of transcription, ranging from £0.01 to £0.05 per minute depending on features and volume. A business transcribing 1,000 minutes monthly might spend £10-50 plus any platform fees. On-device solutions require upfront hardware investment but eliminate recurring per-minute costs, making them economical for high-volume users. Calculate your expected monthly transcription volume and compare total cost across pricing models.
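The comparison can be worked through with a short calculation. The Python sketch below uses the per-minute range quoted above (1p to 5p) and the 1,000-minute example; the function names and the £600 hardware figure are hypothetical placeholders, so substitute quotes from your own vendors. Rates are entered in pence to keep the arithmetic exact.

```python
import math


def monthly_cloud_cost(minutes_per_month, pence_per_minute, platform_fee_pounds=0.0):
    """Monthly cloud spend in pounds: per-minute charges plus any fixed platform fee."""
    return minutes_per_month * pence_per_minute / 100 + platform_fee_pounds


def break_even_months(hardware_cost_pounds, minutes_per_month, pence_per_minute):
    """Whole months of cloud fees needed to equal a one-off hardware purchase.

    Ignores electricity, maintenance and staff time, which a real comparison
    should include.
    """
    monthly = monthly_cloud_cost(minutes_per_month, pence_per_minute)
    if monthly <= 0:
        raise ValueError("monthly cloud cost must be positive")
    return math.ceil(hardware_cost_pounds / monthly)


if __name__ == "__main__":
    minutes = 1000
    for pence in (1, 5):
        cost = monthly_cloud_cost(minutes, pence)
        print(f"{pence}p per minute: £{cost:.2f} per month")

    hardware = 600  # hypothetical on-device hardware cost in pounds
    print(f"Break-even at 5p per minute: {break_even_months(hardware, minutes, 5)} months")
```

At low volumes the break-even point can be years away, which is why on-device processing tends to suit high-volume users or businesses with strict data-locality requirements.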
